Exploring the posterior

Recall: simple binomial model

5 trials; 4 ‘successes’

This is the same as an intercept-only logistic regression with a logit-transformed uniform prior on the intercept

Bayes' rule
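As a rough illustration of Bayes' rule for the binomial example (5 trials, 4 successes, uniform prior), the posterior can be approximated on a grid: multiply likelihood by prior at each candidate value and normalize. The grid resolution and the variable names are assumptions made for this sketch, not part of the original slides.

```python
from math import comb

# Data from the slides: 5 trials, 4 successes.
n_trials, n_success = 5, 4

# Hypothetical grid of candidate values for the success probability p.
grid = [i / 1000 for i in range(1001)]

def likelihood(p):
    """Binomial probability of the observed data given p."""
    return comb(n_trials, n_success) * p**n_success * (1 - p) ** (n_trials - n_success)

prior = [1.0 for _ in grid]  # uniform prior on p
unnormalized = [likelihood(p) * w for p, w in zip(grid, prior)]

# Bayes' rule: dividing by the total makes the grid weights sum to 1.
total = sum(unnormalized)
posterior = [u / total for u in unnormalized]

posterior_mean = sum(p * w for p, w in zip(grid, posterior))
posterior_mode = grid[posterior.index(max(posterior))]
print(f"posterior mean ~ {posterior_mean:.3f}, mode ~ {posterior_mode:.3f}")
```

With a uniform prior the exact posterior is Beta(5, 2), so the grid mean should land close to 5/7 and the mode close to 4/5.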

Hill climbing (MAP/ML)
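A minimal sketch of hill climbing on the binomial example, assuming a simple "try both neighbours, halve the step when stuck" rule. The starting point, step size, and tolerance are illustrative choices. Because the prior is uniform, the MAP and ML estimates coincide here.

```python
from math import log

n_trials, n_success = 5, 4  # data from the slides

def log_posterior(p):
    """Unnormalized log posterior under a uniform prior (equal to the log likelihood)."""
    if not 0.0 < p < 1.0:
        return float("-inf")
    return n_success * log(p) + (n_trials - n_success) * log(1.0 - p)

def hill_climb(start, step=0.1, tol=1e-6):
    """Move to the better neighbour; halve the step when neither neighbour improves."""
    p = start
    while step > tol:
        # Listing p first means ties are resolved by staying put.
        best = max((p, p - step, p + step), key=log_posterior)
        if best == p:
            step /= 2
        else:
            p = best
    return p

p_map = hill_climb(start=0.3)
print(f"MAP estimate ~ {p_map:.4f}")
```

The climb should settle near 4/5, the peak of the posterior. It returns a single point and says nothing about the spread around it.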

Markov chain Monte Carlo

Density based on 50,000 posterior observations
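One way such a set of 50,000 posterior draws could be produced is a random-walk Metropolis sampler, sketched below for the binomial example. The proposal standard deviation, starting value, and seed are assumptions for illustration; the original figures may have used different settings.

```python
import random
from math import exp, log

n_trials, n_success = 5, 4  # data from the slides

def log_posterior(p):
    """Unnormalized log posterior for p under a uniform prior."""
    if not 0.0 < p < 1.0:
        return float("-inf")  # no posterior mass outside (0, 1)
    return n_success * log(p) + (n_trials - n_success) * log(1.0 - p)

def metropolis(n_draws, start=0.5, proposal_sd=0.3, seed=1):
    """Random-walk Metropolis: propose a nearby value, accept with prob. min(1, ratio)."""
    rng = random.Random(seed)
    current, draws = start, []
    for _ in range(n_draws):
        proposal = current + rng.gauss(0.0, proposal_sd)
        log_ratio = log_posterior(proposal) - log_posterior(current)
        if log_ratio >= 0.0 or rng.random() < exp(log_ratio):
            current = proposal
        draws.append(current)  # a rejected proposal repeats the current value
    return draws

draws = metropolis(50_000)
posterior_mean = sum(draws) / len(draws)
print(f"posterior mean ~ {posterior_mean:.3f}")
```

A histogram or kernel density of `draws` would approximate the posterior density; the mean should be near 5/7, the mean of the exact Beta(5, 2) posterior.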

Hamiltonian Monte Carlo

Simulate a physical system

“Energy” at any point $\theta$ in the parameter space is proportional to the negative log posterior probability given the data $y$: $E(\theta) \propto -\log p(\theta \mid y)$

Get random draws by ‘perturbing’ a particle in that field

Place a particle in that system, give it a push in a random direction, and use Hamiltonian dynamics to simulate its motion.

Wherever the particle ends up after a fixed amount of time is the next candidate draw from the posterior.
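The particle picture above can be sketched for the binomial example as follows. This is a bare-bones HMC with a fixed step size and a fixed number of leapfrog steps, working on the logit scale so the parameter is unconstrained. The tuning values, the seed, and the function names are assumptions for illustration, and real samplers such as Stan's are considerably more refined.

```python
import random
from math import exp, log1p

# On the logit scale, a uniform prior on p plus 4 successes and 1 failure gives
# log posterior(theta) = A*log(sigmoid(theta)) + B*log(1 - sigmoid(theta)) + const.
A, B = 5, 2  # successes + 1, failures + 1

def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + log1p(exp(-abs(x)))

def sigmoid(x):
    if x >= 0:
        return 1.0 / (1.0 + exp(-x))
    e = exp(x)
    return e / (1.0 + e)

def potential(theta):
    """'Energy': negative log posterior, up to an additive constant."""
    return A * softplus(-theta) + B * softplus(theta)

def grad_potential(theta):
    return (A + B) * sigmoid(theta) - A

def hmc(n_draws, step_size=0.3, n_steps=10, start=0.0, seed=1):
    rng = random.Random(seed)
    theta, draws = start, []
    for _ in range(n_draws):
        momentum = rng.gauss(0.0, 1.0)  # the random "push"
        new_theta, new_mom = theta, momentum
        # Leapfrog integration of Hamiltonian dynamics.
        new_mom -= 0.5 * step_size * grad_potential(new_theta)
        for i in range(n_steps):
            new_theta += step_size * new_mom
            if i < n_steps - 1:
                new_mom -= step_size * grad_potential(new_theta)
        new_mom -= 0.5 * step_size * grad_potential(new_theta)
        # Accept or reject the candidate based on the change in total energy.
        h_old = potential(theta) + 0.5 * momentum**2
        h_new = potential(new_theta) + 0.5 * new_mom**2
        if rng.random() < exp(min(0.0, h_old - h_new)):
            theta = new_theta
        draws.append(sigmoid(theta))  # report draws on the probability scale
    return draws

hmc_draws = hmc(5_000)
hmc_mean = sum(hmc_draws) / len(hmc_draws)
print(f"posterior mean ~ {hmc_mean:.3f}")
```

The accept/reject step corrects for the small errors the leapfrog integrator introduces, which is why the end point is only a candidate draw.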

HMC vs. ‘standard’ MCMC

Takes advantage of gradient

Gradient (slope) information helps HMC adjust to the shape of the posterior.

Reduces autocorrelation

HMC tends to explore the plausible areas of the parameter space much more quickly than ‘standard’ MCMC like Metropolis–Hastings. It is not likely to spend too much time in one small area.

“No-U-Turn sampler” (NUTS)

A version of HMC that automatically tunes some of the algorithm's meta-parameters, such as how long each simulated trajectory runs.

What can go wrong?

Autocorrelation

- Because each posterior draw depends on the previous one, HMC output usually shows some autocorrelation

- 1,000 autocorrelated draws carry less information than 1,000 independent draws

- Relevant quantity: effective sample size (ESS)
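To make the idea of ESS concrete, the sketch below compares 1,000 independent draws with 1,000 draws from a strongly autocorrelated chain. The estimator is a simplified one (sum the autocorrelations until the first non-positive value) and is an assumption of this example; Stan and brms report a more careful estimate.

```python
import random

def autocorrelation(x, lag):
    """Sample autocorrelation of the sequence x at the given lag."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n
    cov = sum((x[i] - mean) * (x[i + lag] - mean) for i in range(n - lag)) / n
    return cov / var

def effective_sample_size(x, max_lag=200):
    """Simplified ESS: N / (1 + 2 * sum of leading positive autocorrelations)."""
    rho_sum = 0.0
    for lag in range(1, max_lag + 1):
        rho = autocorrelation(x, lag)
        if rho <= 0.0:
            break  # stop at the first non-positive autocorrelation
        rho_sum += rho
    return len(x) / (1.0 + 2.0 * rho_sum)

rng = random.Random(1)
independent = [rng.gauss(0.0, 1.0) for _ in range(1000)]

# Hypothetical autocorrelated chain: each value keeps 90% of the previous one.
correlated = [0.0]
for _ in range(999):
    correlated.append(0.9 * correlated[-1] + rng.gauss(0.0, 1.0))

ess_independent = effective_sample_size(independent)
ess_correlated = effective_sample_size(correlated)
print(f"independent: {ess_independent:.0f}, autocorrelated: {ess_correlated:.0f}")
```

Both sequences contain 1,000 values, but the autocorrelated one should report a much smaller effective sample size.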

What can go wrong?

Non-convergence

- Sampling may have trouble “converging” (properly representing the posterior)

- Many possible causes:

  - Bad model specification

  - Insufficient iterations

  - Badly tuned sampler

- Diagnose with R̂ (“Rhat”), which checks agreement between multiple chains
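The logic behind R̂ can be sketched with the classic between-chain versus within-chain variance comparison. This is the basic form, used here as an assumption for illustration; current versions of Stan report a refined split, rank-normalized variant. The simulated chains are made up for the example.

```python
import random

def rhat(chains):
    """Basic R-hat: compares between-chain and within-chain variance."""
    m, n = len(chains), len(chains[0])
    means = [sum(c) / n for c in chains]
    grand_mean = sum(means) / m
    between = n / (m - 1) * sum((mu - grand_mean) ** 2 for mu in means)
    within = sum(
        sum((v - mu) ** 2 for v in c) / (n - 1) for c, mu in zip(chains, means)
    ) / m
    pooled = (n - 1) / n * within + between / n
    return (pooled / within) ** 0.5

rng = random.Random(1)

# Four hypothetical chains that all sample the same distribution.
agreeing = [[rng.gauss(0.0, 1.0) for _ in range(1000)] for _ in range(4)]

# Four hypothetical chains where one is stuck in a different region.
disagreeing = [[rng.gauss(c, 1.0) for _ in range(1000)] for c in (0.0, 0.0, 0.0, 3.0)]

rhat_agreeing = rhat(agreeing)
rhat_disagreeing = rhat(disagreeing)
print(f"agreeing chains: {rhat_agreeing:.3f}, disagreeing chains: {rhat_disagreeing:.3f}")
```

Values close to 1 indicate that the chains agree; values noticeably above 1 suggest that at least one chain is exploring a different region.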

What can go wrong?

Divergent transitions

- HMC can ‘overshoot’ if the posterior density has sharp edges

- Common in multilevel models

- Stan (and brms) will warn you about these

- Address with re-specification of the model or finer-grained steps
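The ‘overshoot’ can be illustrated with a toy example: a very narrow Gaussian posterior simulated with leapfrog steps that are either small or too large. The posterior, starting point, and step sizes are assumptions chosen for the sketch; the point is only that a large jump in total energy along the simulated path signals that the simulation has gone wrong.

```python
def leapfrog_energy_error(step_size, n_steps=20, scale=0.1, theta=0.1, momentum=1.0):
    """Simulate a particle on a narrow Gaussian and return the change in total energy."""

    def potential(t):
        return 0.5 * (t / scale) ** 2  # negative log density of Normal(0, scale)

    def grad(t):
        return t / scale**2

    h_start = potential(theta) + 0.5 * momentum**2
    for _ in range(n_steps):
        momentum -= 0.5 * step_size * grad(theta)
        theta += step_size * momentum
        momentum -= 0.5 * step_size * grad(theta)
    h_end = potential(theta) + 0.5 * momentum**2
    return abs(h_end - h_start)

fine_error = leapfrog_energy_error(step_size=0.05)   # finer-grained steps
coarse_error = leapfrog_energy_error(step_size=0.3)  # steps too large for this posterior
print(f"energy error, small steps: {fine_error:.3g}")
print(f"energy error, large steps: {coarse_error:.3g}")
```

With the small step size the energy stays nearly constant, while the large step size makes it blow up. This is the sense in which finer-grained steps can remove divergences.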

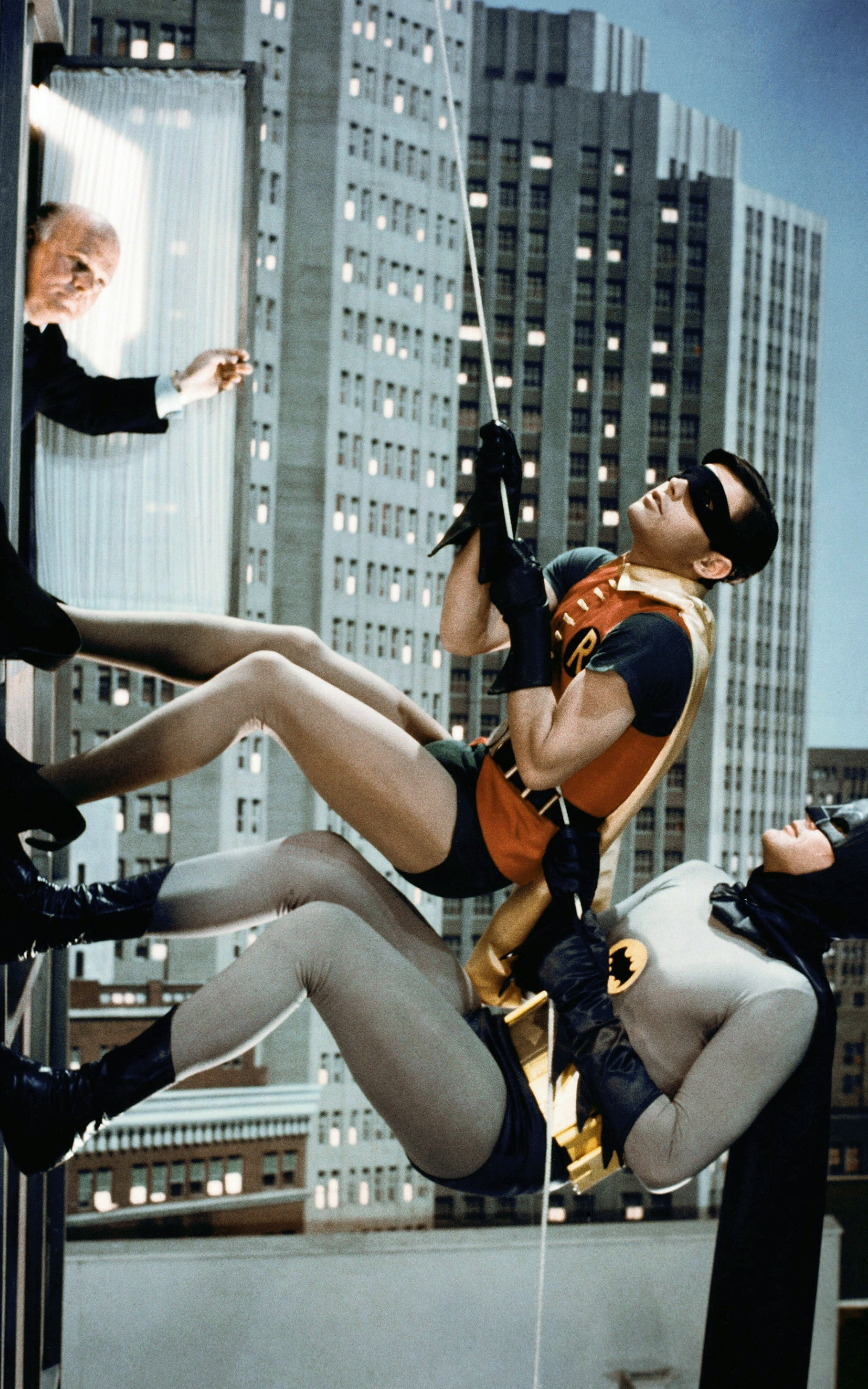

Image credit

Figures by Peter McMahan (source code)

Still from Batman (1966)

Still from The Legend of the Drunken Master (1994)

Clip from Gleaming the Cube (1989)

Clip from Raiders of the Lost Ark (1981)